Program: Camden Youth Engagement Project (YEP), in Minnesota.

Organization: The Cleveland, Lind-Bohannon, Shingle Creek and McKinley neighborhood associations in the Camden area of North Minneapolis; Community Education Programs at Jenny Lind and Lucy Craft Laney public schools; and the Hennepin County Research, Planning and Development Department.

Types of Evaluation: Based on a developmental, participatory model that gathered data through youth and adult consensus workshops, community event surveys, youth participant surveys and interviews with YEP crew leaders.

Sample Sizes: Nine youths and six adults attended consensus workshops; 46 surveys were collected at community events; 23 youth participants completed surveys; and eight crew leaders were interviewed.

Evaluation Period: May 2007 to May 2008 (Phases 3 and 4).

Cost: About $20,000 in dedicated staff time, but no official cost has been calculated. The total cost of the program was about $75,000, including in-kind contributions from partners.

Funded By: No money set aside for evaluation. Hennepin County provided one staff evaluator.

Contact: Rebecca Gilgen, Hennepin County Research, Planning & Development (612) 879-3583. Search for “Community Youth Action Crew” at www.co.hennepin.mn.us.

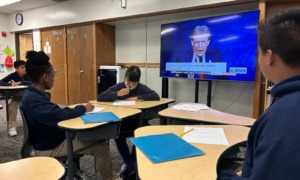

Gilgen: The youths “don’t realize it’s amazing that the mayor is making decisions based on their program.”

Perhaps you think your program is doing pretty well when it comes to youth engagement. Maybe you have a youth on your board of directors, or you seek out youth voices for decision-making about rules or curriculum.

Now consider the Camden, Minn., Youth Engagement Project (YEP) and its two new replication sites. Not only are youth responsible for the program’s day-to-day activities, but they participate as full partners in evaluation design, data collection, data analysis and the presentation of findings to funders and decision makers.

“It has made things more difficult,” said Rebecca Gilgen, senior planning analyst for Hennepin County’s Research, Planning & Development Department, a YEP partner. “Any time you increase the number of voices you need for consensus before you move on, it gets harder. … But it’s invaluable.”

Gilgen said that when researchers conduct evaluations of youth programs without first consulting the youths about what to ask, “We’re creating an evaluation that we say is valid and reliable, but it’s not – because it’s only valid and reliable from an adult perspective.”

Youth Crew Model

YEP was launched in early 2006 in response to a call by the Minneapolis mayor and police chief to fight the causes of delinquency. A partnership of local schools, neighborhood associations and Hennepin County – funded in part by Wells Fargo Bank, the McKnight Foundation and the Minneapolis Public Schools – built the local initiative on the Youth Action Crew model.

That model, developed by the Youth Work Institute at the University of Minnesota, is an asset-based youth engagement approach that focuses on training youth to document, disseminate information about, and influence positive activities and resources in their communities.

YEP recruited teen leaders, who in turn recruited crews of 13- to 16-year-olds within their own neighborhoods. Each youth got a stipend of around $100 a month, based on a minimum four-hour workweek.

YEP’s programming unfolded in four phases. In Phase 1, crews assessed youth interest in out-of-school-time activities by interviewing neighborhood youth and program providers. In Phase 2, youth conducted face-to-face surveys with community leaders, organizations and businesses and mapped the resources available to youth in targeted neighborhoods. They also developed a marketing campaign to increase awareness of the maps and the availability of the identified resources.

An evaluation at the end of Phase 2 found that YEP had “created a positive change in attitudes and behaviors in both the youth and adults who participated.” Both age groups said they had learned to work collaboratively; adults became more willing to hear youth voices and view them as leaders; and the youth said they felt more confident in their abilities and a new willingness to be civically involved.

In Phase 3, YEP youth and adults worked at building community consensus and support around their efforts, and promoted the development of new resources. By then, the youth began asking, “What difference does this project really make?”

In Phase 4, they tried to answer that question.

New Tools for Measurement

The change from evaluating a process and the quality of participation to evaluating hard outcomes made the second evaluation more complicated. Gilgen said the group decided, “If we’re doing marketing, what we can expect to find is an increase in awareness. So we said, ‘Let’s measure that.’ ”

Money constraints and YEP’s dedication to youth engagement forced innovations in the evaluation design, resulting in methods that were low in cost and high in youth participation and buy-in. Among them:

• Cluster or systematic samples

YEP didn’t have the resources for a random sample of Camden residents. So the crews circulated at local community events, such as the North Minnesota Housing Fair, interviewing every third person about their awareness of YEP and its products.

“It’s by no means the gold standard, but the most important part was to begin to understand if we were making any kind of impact,” Gilgen said.

• Consensus groups

Instead of using traditional focus groups, YEP gathered data from youth and adult participants in consensus groups. In focus groups, evaluators have to read and code participants’ answers into theme areas. In consensus groups, the participants themselves cluster their responses under themes – virtually eliminating researcher bias and reducing analysis time, according to Gilgen.

• Survey Monkey

At the two replication sites in Brooklyn Park and Richfield, Minn., youth crews used Survey Monkey – which allows users to create and launch an online survey of up to 10 questions for free – to analyze the handwritten Phase 2 mapping surveys they had collected through interviews in their communities.

“They entered 1,000 handwritten surveys onto the Internet. After every 100 to 200, they were able to click a button and see the results,” Gilgen said. “It kept the motivation going.”
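For readers who want to try the every-third-person interviewing described above, the selection rule can be sketched in a few lines of Python. Statisticians usually call this approach systematic sampling. This is only an illustration, not YEP's actual procedure: the function name `systematic_sample`, the `attendees` list and the choice to start with the third person are all assumptions made for the example.

```python
from typing import List, Sequence, TypeVar

T = TypeVar("T")


def systematic_sample(people: Sequence[T], interval: int = 3, start: int = 2) -> List[T]:
    """Pick every `interval`-th person, beginning at 0-based position `start`.

    Hypothetical helper for illustration; with the defaults it selects
    the 3rd, 6th, 9th, ... person in the order they pass by.
    """
    if interval < 1:
        raise ValueError("interval must be at least 1")
    if not 0 <= start < interval:
        raise ValueError("start must fall within the first interval")
    return list(people[start::interval])


# Made-up stand-ins for people walking past a crew at a community event.
attendees = [f"attendee_{i}" for i in range(1, 11)]
selected = systematic_sample(attendees)
print(selected)
```

Choosing `start` at random within the first interval is a common refinement. Either way, the people sampled are only those who showed up at the event, which is why Gilgen describes the method as short of the gold standard.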

Outcomes

The evaluation of Phases 3 and 4 showed continued positive impacts of YEP on its youth and adult participants, as well as increased awareness of the program. It also offered proof to funders that the initiative was “delivering on its promises” to host events and distribute maps, Gilgen said.

Among YEP’s findings:

• Crews distributed more than 1,500 maps at 13 community events.

• Fifty-seven percent of north Minneapolis residents surveyed at community events said they were aware of the Camden map of youth assets.

• More than one-quarter of surveyed residents said they would keep the map to help find youth-friendly places or would post or display the map in their homes or businesses.

• Nearly 90 percent of those surveyed at community events said they would attend a YEP event.

• Crews produced 10 movie nights and two talent shows, attended by an average of 20 and 35 youths per event, respectively.

• Crews raised nearly $2,000 through fundraising and grant-writing activities.

• YEP gained a new fiscal sponsor.

Gilgen said the work instilled confidence in the youth, the program and funders. As for measuring community impact, she summed it up this way:

“The youth are so involved in the tasks … they don’t realize it’s amazing that the mayor is making decisions based on their program. When they turn that corner and see the impact, and are standing in front of the mayor, they begin to see a much larger identity for themselves in their community.

“And that’s a huge shift … that has less to do with the young person, and more with what the community expects from a young person.”